On 25 April 2019, we were honored to feature two speakers in our KDD.SG Seminar series and to host them at the SMU School of Information Systems.

Agenda of the Seminar

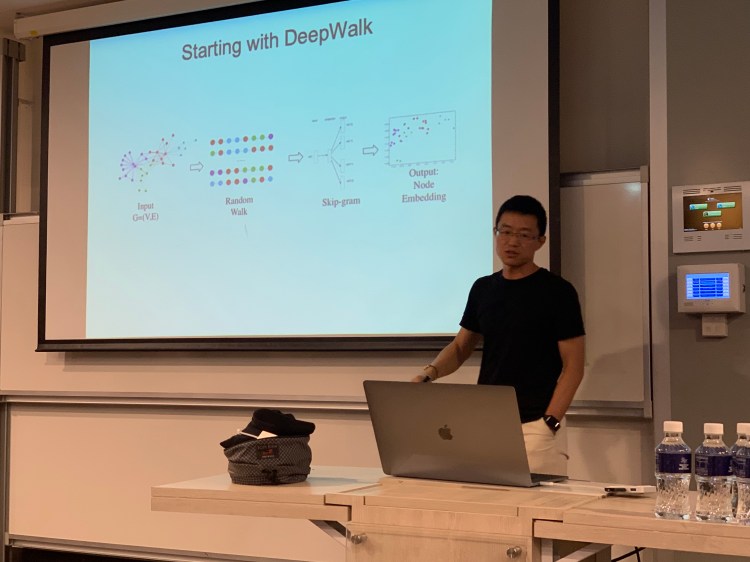

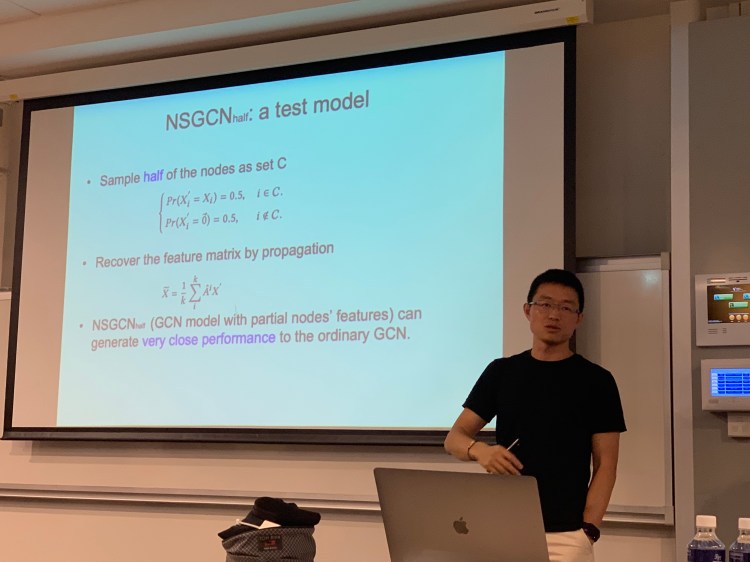

Prof Jie Tang from Tsinghua University summarized several works on using deep learning to derive graph representations, distilled their fundamental concepts, and described several generalizations and extensions of such models.

Jie describing the process of learning node embeddings from a graph, in this case by applying the Skip-gram technique to random-walk paths on the graph
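For readers unfamiliar with the idea, here is a minimal sketch of the data-preparation step behind this family of methods: sample random walks over a graph, then slide a window over each walk to produce the (center, context) node pairs that a Skip-gram model would be trained on. This is an illustration only, not code from the talk; the toy graph `adj`, the function names, and the walk length and window size are all assumptions made for the example.

```python
import random


def random_walk(adj, start, length, rng):
    """Sample one uniform random walk of at most `length` nodes from `start`."""
    walk = [start]
    while len(walk) < length:
        neighbors = adj[walk[-1]]
        if not neighbors:  # dead end: stop the walk early
            break
        walk.append(rng.choice(neighbors))
    return walk


def skipgram_pairs(walk, window):
    """Return (center, context) pairs for nodes within `window` steps of each other."""
    pairs = []
    for i, center in enumerate(walk):
        lo = max(0, i - window)
        hi = min(len(walk), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, walk[j]))
    return pairs


# Hypothetical toy graph as an adjacency list (undirected, 4 nodes).
adj = {0: [1, 2], 1: [0, 2], 2: [0, 1, 3], 3: [2]}
rng = random.Random(0)  # fixed seed so the sampled walks are repeatable

# Two walks of length 5 starting from every node.
walks = [random_walk(adj, node, 5, rng) for node in adj for _ in range(2)]

# Training pairs for a Skip-gram model; the model itself is omitted here.
pairs = [p for w in walks for p in skipgram_pairs(w, window=2)]
```

The resulting `pairs` play the same role as (word, context word) pairs in text: nodes that co-occur on short walks end up with similar embeddings once a Skip-gram model is trained on them.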

Jie discussing how NSGCN, a graph convolutional network using partial nodes’ features, can be much more efficient than an ordinary GCN while achieving close performance

In turn, Prof Michalis Vazirgiannis from Ecole Polytechnique described his recent work on using deep learning to derive representations for sets.

Michalis introducing a recent work on using neural networks to learn set representations

Michalis providing an overview of several related works on deep learning for graphs emerging from his lab

The audience was engaged, and the ensuing question-and-answer session was lively, with a number of relevant questions. Overall, it was a very informative and inspiring session that deepened our understanding of deep learning and neural networks.

